Artificial intelligence (AI) face recognition is a cutting-edge technology changing how we identify people in images or videos. It’s based on computer vision, which includes powerful deep learning and image processing methods.

These methods come together in a set of algorithms that work together seamlessly, making it easy for software to identify people in videos or still images.

This technology is at the forefront of AI applications and is an advanced form of biometric authentication. So, what are its use cases, and are there any bad things about it?

Let’s talk a bit more about AI face recognition and how this is going to affect you. Privacy may be a thing of the past because of the rapid growth of these technologies.

What is facial recognition?

Facial recognition is a type of biometric software that basically takes your face and creates a faceprint by mapping out your facial features. This magic is done using deep learning algorithms.

Then, when you need to be identified, the system compares your live mugshot or a digital pic to this stored faceprint to make sure it’s really you.
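To make that comparison step a bit more concrete, here is a minimal sketch, assuming a faceprint is just a list of numbers and that "close enough" is decided with cosine similarity. The function names, the 0.8 threshold, and the tiny four-number faceprints are all made up for illustration; real systems use much longer vectors and tune their own thresholds.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def is_same_person(stored_faceprint, live_faceprint, threshold=0.8):
    """Accept the identity claim only if the faceprints are similar enough.

    The threshold is an assumed value, not one taken from any real product.
    """
    return cosine_similarity(stored_faceprint, live_faceprint) >= threshold

# Hypothetical faceprints: one enrolled, one new capture of the same face,
# and one capture of a different face.
enrolled = [0.12, 0.80, 0.35, 0.44]
same_face = [0.10, 0.82, 0.33, 0.47]
other_face = [0.90, 0.10, 0.05, 0.40]

print(is_same_person(enrolled, same_face))   # True
print(is_same_person(enrolled, other_face))  # False
```

Raising the threshold makes the check stricter (fewer impostors get through, but more genuine users get rejected), which is the basic trade-off behind any system like this.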

Now, when we mix face recognition with Artificial Intelligence (AI), things get even more, let’s say, interesting. AI uses computer vision technology, typically convolutional neural networks (CNNs), to identify people or objects in photos or videos. This involves many techniques, like deep learning, computer vision algorithms, and image processing.

You’ve probably seen facial recognition in action when unlocking your phone or at the door of some high-tech office. It’s also used for passport authentication at airports, in various security systems, and even in the medical field. Some smart systems can even figure out how you feel just by looking at your face!

In 2021, there was a big jump in venture funding flowing into facial recognition start-ups, showing that people are betting big on this tech.

It’s opening up new possibilities in advertising, healthcare, security, and even ensuring students pay attention during online exams.

History of Face Recognition

Face recognition as we know it today started back in the early 1990s, when the Eigenface approach led the way. In the 90s and 2000s, holistic approaches were all the rage.

They tried understanding faces by assuming they fit into mathematical spaces like linear subspaces. But they weren’t great at dealing with the unpredictable ways faces can change.
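Here is a rough sketch of the linear-subspace idea, in the spirit of Eigenfaces but not a reproduction of the original method. It assumes each face is a flattened list of pixel values, finds the single direction along which the faces vary most (using power iteration, chosen here only to avoid extra libraries), and describes each face by one coordinate along that direction. The four-pixel "faces" are invented toy data, and the uniform starting vector assumes it is not exactly perpendicular to the direction being searched for.

```python
import math

def eigenface_coordinates(faces, iterations=100):
    """Project flattened face images onto their leading principal direction."""
    n, dim = len(faces), len(faces[0])
    mean = [sum(col) / n for col in zip(*faces)]
    centered = [[p - m for p, m in zip(face, mean)] for face in faces]

    # Power iteration: repeatedly apply the (unnormalised) covariance
    # matrix X^T X to a vector until it settles on the top eigenvector.
    v = [1.0 / math.sqrt(dim)] * dim
    for _ in range(iterations):
        scores = [sum(r[i] * v[i] for i in range(dim)) for r in centered]
        w = [sum(s * r[i] for s, r in zip(scores, centered)) for i in range(dim)]
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]

    # Each face is now summarised by a single number along that direction.
    coords = [sum(r[i] * v[i] for i in range(dim)) for r in centered]
    return v, coords

# Toy data: two "images" of one hypothetical person, then two of another.
faces = [
    [10, 20, 30, 40],
    [12, 22, 33, 44],
    [50, 40, 30, 20],
    [52, 41, 29, 18],
]

direction, coords = eigenface_coordinates(faces)
print(coords)  # the first two values share one sign, the last two the other
```

Real holistic methods keep many such directions, not one, but the weakness mentioned above is already visible: anything that changes pixel values a lot (pose, lighting, expression) moves a face around in this space even though the person is the same.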

In the 2000s, local feature-based face recognition started to make some noise. Techniques like Gabor filters and Local Binary Patterns (LBP) stepped up, trying to make sense of faces piece by piece. But these handcrafted features had their problems: they weren’t distinctive enough to reliably tell faces apart, and they were a bit too bulky to store and compare.
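To show what "piece by piece" means for LBP, here is a minimal sketch of the basic 3x3 version: each pixel is compared with its eight neighbours, and the results are packed into one 8-bit code. The neighbour ordering and the helper names are choices made for this example; published variants differ in details such as radius and bit order.

```python
def lbp_code(patch):
    """8-bit Local Binary Pattern code for the centre of a 3x3 patch."""
    center = patch[1][1]
    # Neighbours visited clockwise, starting at the top-left pixel.
    offsets = [(0, 0), (0, 1), (0, 2), (1, 2), (2, 2), (2, 1), (2, 0), (1, 0)]
    code = 0
    for bit, (r, c) in enumerate(offsets):
        # A neighbour at least as bright as the centre sets its bit.
        if patch[r][c] >= center:
            code |= 1 << bit
    return code

def lbp_histogram(image):
    """Histogram of LBP codes over every interior pixel of a 2D image."""
    hist = [0] * 256
    for r in range(1, len(image) - 1):
        for c in range(1, len(image[0]) - 1):
            patch = [row[c - 1:c + 2] for row in image[r - 1:r + 2]]
            hist[lbp_code(patch)] += 1
    return hist

patch = [
    [6, 5, 2],
    [7, 6, 1],
    [9, 8, 7],
]
print(lbp_code(patch))  # 241
```

A face is typically described by computing one such 256-bin histogram per image region and joining them together, which hints at why these descriptors end up fairly large.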

In the early 2010s, learning-based local descriptors changed everything. These were smarter, learning from faces to get better at telling them apart.

Then, in 2014, Facebook’s DeepFace and the DeepID models nailed it on the LFW (Labeled Faces in the Wild) benchmark, matching and then beating human-level accuracy at identifying faces in wild, uncontrolled settings.

Since then, deep-learning-based approaches have been used to pick out features from faces, helped by massive face databases and techniques that keep getting better. Over this history, accuracy on the LFW benchmark has jumped from about 60% to over 97%.

How does it work?

Facial recognition applications identify up to 80 nodal points on your face. Think of these points as tiny markers on key parts of your face, like the length or width of your nose, how deep your eye sockets are, or the shape of your cheekbones.

This tech snaps a pic of these points, turning your face into a digital ID card called a faceprint. This faceprint then becomes the go-to basis for comparison when the system tries to match you up with your picture or a video.
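As a rough illustration of turning nodal points into a faceprint, the sketch below assumes the points have already been located as (x, y) coordinates and simply measures every pairwise distance, scaled by the distance between the eyes so that image size does not matter. The landmark names, the coordinates, and the choice of eye distance as the yardstick are assumptions for this example, not a description of any particular product.

```python
import math
from itertools import combinations

def faceprint_from_landmarks(landmarks):
    """Turn named (x, y) landmarks into a scale-normalised distance vector."""
    eye_distance = math.dist(landmarks["left_eye"], landmarks["right_eye"])
    names = sorted(landmarks)  # fixed order so faceprints are comparable
    return [
        math.dist(landmarks[a], landmarks[b]) / eye_distance
        for a, b in combinations(names, 2)
    ]

# Hypothetical landmark positions in pixels.
face = {
    "left_eye": (30, 40),
    "right_eye": (70, 40),
    "nose_tip": (50, 65),
    "mouth": (50, 85),
}
# The same face photographed at twice the resolution.
bigger_face = {name: (2 * x, 2 * y) for name, (x, y) in face.items()}

print(faceprint_from_landmarks(face))
print(faceprint_from_landmarks(bigger_face))  # same values, up to rounding
```

With 80 points instead of 4, the same idea yields thousands of measurements, and modern systems usually let a neural network learn the faceprint rather than hand-picking distances.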

The facial recognition system is quick and spot-on under the right conditions. But if your face is partly hidden or you’re looking sideways, it might get a bit confused.

The chance of these algorithms messing up, known as the false positive rate, has been cut in half every couple of years since 1993, according to the National Institute of Standards and Technology (NIST).
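To get a feel for how quickly that kind of halving adds up, here is a small back-of-the-envelope calculation. It assumes "a couple of years" means exactly two and that the halving is perfectly steady, neither of which the NIST figure actually promises, so treat the output as an illustration of the trend rather than measured error rates.

```python
def relative_error_rate(year, start_year=1993, halving_period_years=2):
    """Error rate as a fraction of its start_year level, assuming steady halving."""
    halvings = (year - start_year) / halving_period_years
    return 0.5 ** halvings

for year in (1993, 1995, 2003, 2013):
    print(year, relative_error_rate(year))
# 1993 1.0
# 1995 0.5
# 2003 0.03125
# 2013 0.0009765625
```

Under these assumptions, twenty years of halving leaves roughly one error for every thousand there used to be.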

Face recognition uses AI algorithms and ML (machine learning) to spot human faces among everything else in a photo or video. It starts by looking for the usual aspects of faces: eyes, eyebrows, nose, mouth, nostrils, and the iris. After that, it double-checks against a huge collection of images to ensure that it’s a human face.

Some systems focus on specific features, like your eyes or nose. Others rely on mathematical calculations and on what they have learned from previously identified data.

Face Recognition Operations

When it comes to face recognition operations, different systems use a variety of methods, but they generally work through the following steps.

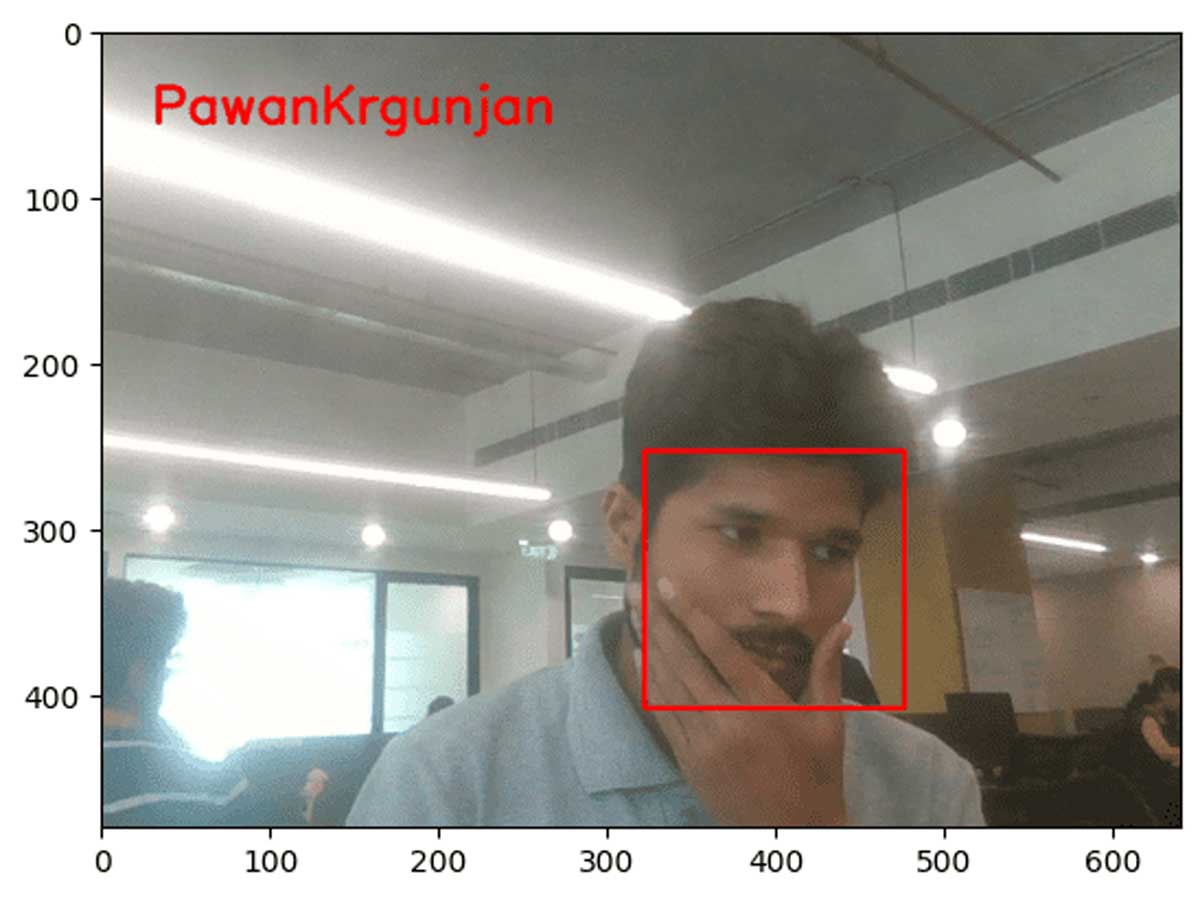

Face Detection: This is where a camera spots and locates a face. It works best when you’re looking straight at the camera, but thanks to tech getting better, it can still figure things out even if your face is a bit tilted.

Face Analysis: Here, a photo of your face gets snapped and studied. Systems usually stick to 2D images instead of 3D because it’s easier to compare with their databases. They look at how far apart your eyes are or what your cheekbones are shaped like.

Image to Data Conversion: Your facial features get turned into a mathematical formula, making a faceprint. Just like everyone has unique fingerprints, everyone has unique faceprints, too.

Match Finding: Your faceprint gets matched against a database full of other faceprints. This database isn’t just random; its photos come with all sorts of ID info. If there’s a match with your features, the system finds and returns it, complete with details like your name and address, depending on what’s stored about you in the system.
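The match-finding step can be sketched as a nearest-neighbour search. This minimal example assumes faceprints are short lists of numbers, that "closeness" is plain Euclidean distance, and that anything farther than an assumed cut-off of 0.6 counts as no match. The records, names, and cut-off are invented; a real database would hold far more entries and use a faster search.

```python
import math

def find_match(probe, database, max_distance=0.6):
    """Return the closest stored record, or None if nothing is close enough."""
    best_record, best_distance = None, float("inf")
    for record in database:
        distance = math.dist(probe, record["faceprint"])
        if distance < best_distance:
            best_record, best_distance = record, distance
    return best_record if best_distance <= max_distance else None

# Hypothetical database: each faceprint is stored with its ID info.
database = [
    {"name": "Alice Example", "faceprint": [0.1, 0.9, 0.3]},
    {"name": "Bob Example", "faceprint": [0.8, 0.2, 0.5]},
]

print(find_match([0.12, 0.88, 0.31], database))  # Alice's record
print(find_match([5.0, 5.0, 5.0], database))     # None
```

Note the difference from unlocking a phone: there the system checks one face against one stored faceprint, while here it searches everyone in the database, so the odds of a false match grow with the database size.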

Popular Face Recognition Software

Face Recognition Software is making a big splash in today’s tech-savvy world. Companies are racing to create software that’s smart and reliable.

Deep Vision AI

Deep Vision AI is a well-known name among facial recognition software, offering some seriously advanced computer vision technology. It’s capable of turning everyday images and videos into valuable insights almost instantly. It’s not just about recognizing faces; it’s about understanding them and reacting in real time.

With its plug-and-play setup, it fits into any existing camera system or cloud setup. Plus, it’s very good at spotting people on watch lists, and its accuracy is pretty impressive.

Deep Vision AI is a big help in understanding customer behavior and ramping up security, all while playing nice with international data protection laws. It’s being used in many sectors, from bustling cities to busy retail environments, and the company has even partnered with big names like NVIDIA and Amazon Web Services (AWS).

SenseTime

SenseTime combines AI with big data. They’re into image recognition and even smart video analytics. With tools like SensePortrait-S and SenseFace, they’re helping big players like Honda and Qualcomm make sense of a world filled with images.

Amazon Rekognition

Amazon Rekognition is a cloud-based service that makes analyzing images and videos a breeze, even for those who don’t know the first thing about machine learning. It’s like having a smart assistant to identify everything from people to objects in your photos, making it a go-to for various applications.

FaceFirst

For those focused on safety and security, FaceFirst is a strong option. It’s about creating a safer space, whether in stores, at events, or during your daily commute. Its tools blend seamlessly into existing systems, making them a favorite in sectors like retail and transportation.

TrueFace

TrueFace is all about empowering you to understand what your cameras see. With an emphasis on diverse data, they ensure that their solutions work for everyone, no matter who you are. They’re big on privacy and speed, offering on-premise solutions that keep your data safe and your operations swift.

Face++

Face++ by Megvii is an open platform that uses AI to analyze faces with incredible accuracy. It’s so user-friendly that developers across the globe are using it to build applications that can do everything from managing traffic to aiding emergency responses.

Kairos

When it comes to ethical solutions, Kairos stands out. They offer both cloud and on-premises options, giving you control over your data and privacy. Their system is designed to scale, making face recognition a snap whether you’re checking one face or ten million.

Cognitec

Lastly, Cognitec is all about giving you the tools to create your own face recognition applications. Their FaceVACS Engine is flexible, easy to integrate, and meets the stringent requirements for ePassports. They’re making sure that quality and security go hand in hand.

Uses of facial recognition technology

Face recognition is everywhere, with its uses stretching across many areas.

- In the health world, apps like Face2Gene and software like DeepGestalt are doing something pretty amazing. They can look at your face and check if you might have a genetic disorder by comparing your features with a database.

- Hospitals are using face recognition for security and to keep track of patients, which is way easier than with old-school paper records. This tech helps staff quickly know who’s who and makes sure everyone there is supposed to be there.

- Another super cool use in hospitals is for detecting emotions and sentiments. This tech can figure out how patients are feeling during their stay, which is a big help for nurses and doctors to know who needs extra care.

- Mobile phone manufacturers use it to keep your phone secure.

- Airports, helped by the Department of Homeland Security, use it to keep tabs on travelers’ visa status.

- Law enforcement is scanning mugshots to match faces to their databases.

- Social media sites, like Facebook, are using it to tag you in photos.

- Businesses are using it for security, like letting the right people into buildings.

- Marketers use face recognition to figure out the best audience for their ads, looking at things like age, gender, and ethnicity.

Security and privacy concerns

When it comes to security and privacy in the United States, there’s a grey area. Surprisingly, there is no specific federal law that protects our biometric data.

This becomes a big deal when you realize that facial recognition systems are not just in sci-fi movies; they’re being used right now, especially in airport security. What’s more, it’s estimated that more than half of the U.S. population might already have their faceprints stored somewhere without even knowing it. That’s a lot of selfies in the system!

Problems and Challenges in Face Recognition Technology

Face recognition systems don’t have it easy. Let’s break down the challenges they face.

- Pose: these systems are OK with a little head tilt here and there. But throw a big angle at them, and they might get stumped, especially if their database has only some of your angles.

- Then, we’ve got expressions. Our moods change, and so do our faces. Laugh, frown, or raise those eyebrows, and the system might get a bit confused about who you are.

- Aging is another tricky bit. Over time, our faces evolve. What you looked like at 20 is different from what you’ll look like at 60, which can throw these systems for a loop.

- Occlusion is a fancy word for something blocking your face. Got a new pair of specs, a bushy beard, or a stylish mustache? These can hide parts of your face, making it tough for the system to get a clear read.

- Lighting, or illumination, is a big deal, too. How light hits your face can change how you appear to the system, with different shadows and highlights. This is a challenge not just for machines but for us humans, too.

- Lastly, there’s the issue of identifying similar faces. Ever bumped into someone who looks just like a friend but isn’t? The system faces the same dilemma, sometimes struggling to tell apart people with lookalike features.

The scary part is that faceprint data can be stolen by hackers, spreading your personal info without you having a clue. It’s not just about someone stealing your profile pic; government agencies or advertisers could use it to keep tabs on where you go and what you do. And what if the system gets it wrong and flags you for a crime you didn’t commit?

If you’re thinking of skipping the country to avoid this, the Department of Homeland Security basically says that if you’re traveling internationally, your biometric data is going to be collected. Meanwhile, across the pond, Europe’s General Data Protection Regulation (GDPR) is taking steps to address these privacy issues.

But here’s the real kicker: the danger of automated blanket surveillance. Without clear laws or rules, we’re walking a thin line. It’s not just about protecting data; it’s about sticking to the principles of necessity and proportionality.

What is Face Anti-Attack?

Face anti-attack is like a high-tech game of cat and mouse: it’s about defending face recognition systems against people trying to fool them. As face recognition tech gets smarter, so do the challenges. We’re seeing a rise in sneaky adversarial machine-learning attacks.

Face Spoofing: Here, bad guys use printed photos or masks to fool the face recognition sensors. But the good news is that devs have come up with some clever defenses. One is a two-stream CNN that’s good at spotting these fake face giveaways. And that’s not all. They’re also using holistic depth maps to ensure the face in front of the camera has the right 3D shape.

To stay one step ahead, they’re fine-tuning neural networks with loads of real and fake images to teach them the difference.

Then there’s this thing called Adversarial Perturbation – it’s like adding a tiny, almost invisible tweak to a photo that completely throws off the recognition system.
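Here is a toy version of that idea, in the style of the fast gradient sign method. It assumes a made-up linear "matcher" standing in for a real neural network: a positive score means the face is recognised. Because the model is linear, the direction that lowers the score fastest is known exactly, so nudging every pixel by a tiny amount in that direction is enough to flip the decision. The weights, pixel values, and epsilon are invented, and a real attack would also keep pixels inside the valid range.

```python
def score(weights, bias, pixels):
    """Toy linear matcher: a positive score means 'recognised'."""
    return sum(w * p for w, p in zip(weights, pixels)) + bias

def sign(value):
    """Return -1, 0, or 1 depending on the sign of value."""
    return (value > 0) - (value < 0)

def perturb(weights, pixels, epsilon):
    """Shift every pixel by at most epsilon in the direction that lowers the score."""
    # For a linear model the gradient of the score w.r.t. the input is `weights`.
    return [p - epsilon * sign(w) for w, p in zip(weights, pixels)]

# Hypothetical model parameters and an 8-pixel "image" with values in [0, 1].
weights = [0.5, -0.4, 0.3, -0.6, 0.2, -0.5, 0.4, -0.3]
bias = 0.0
pixels = [0.8, 0.5, 0.7, 0.4, 0.5, 0.5, 0.6, 0.5]

epsilon = 0.05  # each pixel changes by only 0.05
adversarial = perturb(weights, pixels, epsilon)

print(score(weights, bias, pixels) > 0)       # True: recognised
print(score(weights, bias, adversarial) > 0)  # False: no longer recognised
```

The more pixels an image has, the smaller each individual change needs to be, which is why these tweaks can be invisible to people on real photos.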

Among the trickiest attacks are template reconstruction attacks. Attackers use a network such as NbNet to rebuild a face image from its stored template, its digital blueprint. It’s like piecing together a puzzle.

When the principles of necessity and proportionality get ignored, our right to privacy takes a hit, and this could shake up our democratic political culture.